This is my talk from NixCon 2022, converted with minimal changes into blog post format.

What?

NixOS is an operating system based on the Nix package manager and Linux. Linux is an operating system kernel, which means it runs on hardware and mediates access to this hardware by the user space – providing abstractions to allow applications and services to care less about what exact hardware they’re running on, and allocating resources to allow multiple processes to run at once.

I’m going to talk about how we get from a bootloader to a running operating system, then compare how “traditional” Linux distros and NixOS fit the pieces together, and then showcase some of the possibilities that NixOS puts within easy reach.

Booting Linux

When you power on your computer, a number of things happen. The first that we’re interested in is the boot loader: it’s responsible both for loading Linux itself and for giving it the startup parameters needed for getting the user space up and running.

Some widespread boot loaders include GRUB, systemd-boot, and, especially in the non-desktop world, U-Boot.

The aforementioned startup parameters are usually the command line and the initramfs. The command line can configure behaviours of the kernel that might be relevant before any userspace can configure them, such as logging behaviour, how to respond to crashes, or what to run as user-space.

The initramfs is a bit more interesting for our purposes…

Initramfs

The initramfs (abbreviation of “initial RAM filesystem”) is where userspace starts. It’s a tree of files – traditionally stored as a disk image (initial ram disk, which is where the common but no longer pedantically accurate name initrd comes from), but nowadays it’s usually a CPIO archive.
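To make the archive format a little more concrete, here is a minimal Python sketch that writes and reads the "newc" flavour of CPIO, which is the layout the kernel unpacks. It is an illustration only: it handles regular files, skips directories, devices and compression, and the helper names (`write_cpio`, `list_cpio`) are made up for this example rather than taken from any real tool.

```python
MAGIC = b"070701"  # magic number of the "newc" cpio format
HEADER_LEN = 110   # 6 bytes of magic + 13 fields of 8 hex digits each


def _pad4(length):
    """Return the NUL bytes needed to round `length` up to a multiple of 4."""
    return b"\0" * (-length % 4)


def _entry(name, data, mode, ino):
    """Encode one archive member: header, NUL-terminated name, then data."""
    raw_name = name.encode() + b"\0"
    # Field order: ino, mode, uid, gid, nlink, mtime, filesize,
    # devmajor, devminor, rdevmajor, rdevminor, namesize, check
    fields = [ino, mode, 0, 0, 1, 0, len(data), 0, 0, 0, 0, len(raw_name), 0]
    out = MAGIC + b"".join(b"%08X" % field for field in fields) + raw_name
    out += _pad4(len(out))            # header + name are padded to 4 bytes
    out += data + _pad4(len(data))    # and so is the file content
    return out


def write_cpio(files):
    """Build an archive from a {name: bytes} mapping of regular files."""
    out = b""
    for ino, (name, data) in enumerate(sorted(files.items()), start=1):
        out += _entry(name, data, 0o100755, ino)  # regular file, mode 0755
    return out + _entry("TRAILER!!!", b"", 0, 0)  # end-of-archive marker


def list_cpio(archive):
    """Parse an archive produced by write_cpio back into {name: bytes}."""
    files, pos = {}, 0
    while True:
        header = archive[pos:pos + HEADER_LEN]
        if header[:6] != MAGIC:
            raise ValueError("not a newc cpio archive")
        fields = [int(header[6 + 8 * i:14 + 8 * i], 16) for i in range(13)]
        filesize, namesize = fields[6], fields[11]
        name_start = pos + HEADER_LEN
        name = archive[name_start:name_start + namesize - 1].decode()
        data_start = name_start + namesize
        data_start += -data_start % 4
        if name == "TRAILER!!!":
            return files
        files[name] = archive[data_start:data_start + filesize]
        pos = data_start + filesize
        pos += -pos % 4
```

For example, `list_cpio(write_cpio({"init": b"#!/bin/sh\n"}))` gives back the same mapping. A real initramfs would also contain directory entries and is usually compressed.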

It contains everything the system needs to boot. The kernel will run a program, often just called “init”, from the initramfs as the first user-space process.

The role of the initramfs is usually to get everything ready for the “real” system.

Modern desktop kernels are usually modular, meaning that they don’t include drivers for all supported hardware directly, instead loading them as modules later on. This makes the kernel significantly smaller and faster to load. However, this includes drivers for storage devices and filesystems which are needed to load the final system, so part of the initramfs’s job is to include and load the kernel modules which provide these drivers.

It may also do some other work, like obtaining encryption keys from the user or a TPM to unlock an encrypted root filesystem, or setting up networking in case the root filesystem is on the network or the user wants to enter encryption passwords via the network.

Once it’s done these things, it will usually mount the root filesystem and pass control on to the next “init”, that of the final system.

Real root filesystem

The “init” in the root filesystem then starts up the rest of userspace. On a typical desktop system, this includes services like clock synchronisation and network management. One of the “services” started is also a login service: both the login prompts you get on text consoles, and the display manager which allows logging into graphical sessions.

Fitting the pieces together

“conventional distros” (example: Debian)

The kernel is a package managed by the system package manager. It includes both the kernel itself (which is what the bootloader loads) and the modules it needs to support a wide range of hardware. The package includes post-installation scripts which the other parts of the boot chain can hook into, in order to reconfigure themselves when a new kernel is installed or an old one is removed.

The initramfs-tools package handles generation of the initramfs. It installs a kernel post-installation hook which will generate an initramfs for the given kernel. Initramfs generation collects information about the installed system to work out what the initramfs should contain.

The things it looks at include for example /etc/fstab, which lists the root filesystem and any others that should be mounted, and /etc/crypttab, which lists encrypted storage devices.
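As a rough sketch of this kind of inspection, the following Python reads fstab-formatted text and picks out the root filesystem, which is the sort of information an initramfs generator needs to decide which filesystem driver to include. This is not how initramfs-tools is implemented (it is a collection of shell scripts); the function names and the sample UUIDs are invented for illustration.

```python
def parse_fstab(text):
    """Parse fstab-formatted text into a list of dictionaries."""
    entries = []
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and comments
        fields = line.split()
        if len(fields) < 3:
            continue  # malformed line: device, mount point and type are required
        entries.append({
            "device": fields[0],
            "mountpoint": fields[1],
            "fstype": fields[2],
            # The options field is a comma-separated list
            "options": fields[3].split(",") if len(fields) > 3 else ["defaults"],
        })
    return entries


def root_filesystem(entries):
    """Return the entry mounted at /, or None if there is none."""
    for entry in entries:
        if entry["mountpoint"] == "/":
            return entry
    return None


SAMPLE = """\
# <file system>  <mount point>  <type>  <options>          <dump>  <pass>
UUID=1111-aaaa   /              ext4    errors=remount-ro  0       1
UUID=2222-bbbb   /boot          vfat    defaults           0       2
"""

root = root_filesystem(parse_fstab(SAMPLE))
print(root["fstype"])  # the generator would need the driver for this type
```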

It then copies the contents (kernel modules, init scripts, the init scripts’ dependencies, and configuration files) into a temporary directory and generates an archive – the finished initramfs – from it.

The boot loader is also a package managed by the system package manager. The package contains both the boot loader and scripts for installing it to the hard drive and for generating its configuration.

The bootloader installation script is usually only run when the bootloader package is installed or updated.

The bootloader configuration scripts, which define boot options (that is, kernel/commandline/initramfs combinations), are added as kernel post-installation hooks so that they run and provide a new boot option whenever a new kernel is installed.

Both the initramfs tools and the boot loader can be configured via a multitude of files in /etc.

NixOS

Let’s have a look at how NixOS puts the pieces together. You may want to refer to the video of the talk in order to consume this section in meme format.

One key concept of NixOS is the system package, or toplevel: the entire system configuration is built into a single package which depends on everything the system needs. Let’s see how the components fit into it.

/run/current-system -> /nix/store/[...]-nixos-system-geruest-22.05-20221014-78a37aa

In a running NixOS system, there’s a symlink at /run/current-system which points to the system package. This package is a directory populated mostly with further symlinks.

kernel -> /nix/store/[...]-linux-6.0/bzImage

One of these points to the kernel image. Often, the bootloader cannot load it directly from the root filesystem and needs it copied to a boot partition instead. Exposing it here makes this process easier.

kernel-params -> "mem_sleep_default=deep consoleblank=60 acpi_osi="Windows 2020" loglevel=4"

The kernel command line is a simple text file. Here we see some parameters which configure the kernel to work better with the hardware I’m running on, as well as the log level.
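To show how such a command line breaks down into individual parameters, here is a small Python sketch that parses the example above. It uses `shlex` as an approximation of the kernel's quoting rules (the kernel only treats double quotes specially), and `parse_cmdline` is a name chosen for this example. On a running Linux system, the command line that was actually used can be read from /proc/cmdline.

```python
import shlex


def parse_cmdline(cmdline):
    """Split a kernel command line into a {name: value} dictionary.

    Parameters without "=" are stored with the value True.
    """
    params = {}
    for word in shlex.split(cmdline):
        name, separator, value = word.partition("=")
        params[name] = value if separator else True
    return params


EXAMPLE = 'mem_sleep_default=deep consoleblank=60 acpi_osi="Windows 2020" loglevel=4'

for name, value in parse_cmdline(EXAMPLE).items():
    print(name, "=", value)
```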

initrd -> /nix/store/[...]-initrd-linux-6.0/initrd

The initramfs is symlinked. NixOS uses the historic and technically inaccurate name initrd. Like the kernel, this may have to be copied to a boot partition.

nixos-version -> "22.05-20221014-78a37aa"

A further text file contains the NixOS version string. This is nice for generating a more informative boot menu.

init

The init script – this is the root filesystem one, which the initramfs hands over to. This does a little setup before starting systemd, NixOS’s service manager, because systemd won’t start successfully if its config is not yet in place.

bin/switch-to-configuration

The switch-to-configuration script takes some steps to apply a NixOS configuration – this is what nixos-rebuild calls once it’s finished building the system package. What exactly it does depends on the argument given.

If passed switch or test, it will measure the current state of the system and determine what needs to be done to move to the configuration defined by this system package. It then takes these steps in order to activate the system.

Another thing the switch action does, which is also performed by the boot action, is to run the boot loader configuration script, which looks at other generations of the system as well and generates boot loader config for all of them by looking at the files mentioned above.
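The idea of deriving a boot entry from the files in a system package can be sketched in a few lines of Python. The output uses the keys of a Boot Loader Specification entry (title, linux, initrd, options), as read by systemd-boot. This is a simplified illustration and not the actual NixOS script: the function name and generation number are hypothetical, and a real implementation would copy the kernel and initramfs to the boot partition and reference them there. Passing `init=` on the command line is how the kernel is told which init to run; that NixOS points it at the system package's init is an assumption of this sketch.

```python
import os
from pathlib import Path


def boot_entry(toplevel, generation):
    """Render a boot menu entry for one system package (toplevel)."""
    top = Path(toplevel)
    # Resolve the symlinks to find the actual kernel and initramfs files
    kernel = os.path.realpath(top / "kernel")
    initrd = os.path.realpath(top / "initrd")
    # The command line and version string are plain text files
    params = (top / "kernel-params").read_text().strip()
    version = (top / "nixos-version").read_text().strip()
    lines = [
        f"title NixOS {version} (generation {generation})",
        f"linux {kernel}",
        f"initrd {initrd}",
        # Hand over to this particular system's init after the initramfs
        f"options init={top / 'init'} {params}",
    ]
    return "\n".join(lines) + "\n"
```

Calling this once per generation of the system yields one menu entry per generation, each with its own kernel, initramfs, command line and init. That is what allows a whole configuration to be selected at boot time, rather than only a kernel.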

The switch and boot actions may also install the boot loader, but this only happens when a particular argument is passed. nixos-install passes this argument so that installing a system also installs its boot loader.

So we bring all these things together in a single system package, and multiple system packages can coexist. This is what enables boot-time rollback of config that goes well beyond just the kernel, while on Debian we have a single initramfs per kernel which needs to be regenerated when config relevant to it changes – and the only boot-time choice we have is which kernel to use.

What’s next?

That concludes our whirlwind tour of how a classic desktop NixOS installation boots.

But what sets NixOS apart – besides of course declarative configuration, parallel installation of multiple systems, parallel installation of multiple versions of the same package, safe per-user package management, rollbacks, easy and fearless patching, reproducibility, and all that – is its versatility.

What if, for example, we were to just put the entire NixOS system in an initramfs?

netboot

The module that implements this is called netboot, because that is what it was originally implemented for. Instead of generating an initramfs which has the necessary bits and bobs to mount a local file system, it generates an initramfs which contains the entire system with all of its dependencies. This is an easy way to boot the whole system from RAM, and is useful for netboot – where a boot loader like iPXE will download the kernel and initramfs via the network, then boot it without needing to touch local persistent storage.
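For a sense of what the iPXE side looks like, here is a Python sketch that renders a minimal iPXE script using the `kernel`, `initrd` and `boot` commands. The URLs and the function name are placeholders invented for this example. The `initrd=` parameter is added because, as far as I know, iPXE needs it to hand the initramfs to the kernel when running under UEFI; treat that detail as an assumption to check against your setup.

```python
def ipxe_script(kernel_url, initrd_url, init_path, extra_params=()):
    """Render an iPXE script that boots a kernel with a full-system initramfs."""
    # iPXE names a downloaded image after the last component of its URL
    initrd_name = initrd_url.rsplit("/", 1)[-1]
    cmdline = " ".join([f"init={init_path}", f"initrd={initrd_name}", *extra_params])
    lines = [
        "#!ipxe",
        f"kernel {kernel_url} {cmdline}",  # download the kernel, set its command line
        f"initrd {initrd_url}",            # download the initramfs
        "boot",                            # start the downloaded kernel
    ]
    return "\n".join(lines) + "\n"


print(ipxe_script(
    "http://boot.example/bzImage",          # placeholder URL
    "http://boot.example/initrd",           # placeholder URL
    "/nix/store/[...]-nixos-system/init",   # elided store path, as in the text
    ["loglevel=4"],
))
```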

This is quite heavy on RAM usage, since the system and all its dependencies are loaded into RAM. The system on my laptop is 5.4GiB in size, so I might not want to have it loaded into RAM at all times.

Of course, while the system doesn’t need local persistent storage, it can still make use of it! For example, a swap partition can be used to allow swapping out unused RAM (such as all the software that’s not running) to make physical memory available for things that actually need it.

Or the initramfs system can install NixOS to the local storage! This can be really useful if you want to provision a whole fleet of desktop or server machines, or if using a USB stick installer is just not enough fun. I’ve been in both situations.

As an aside, nowadays the netboot image does not embed the entire system directly into the initramfs. Instead, it embeds a squashfs image, which can often compress the filesystem more efficiently than a CPIO archive, and allows keeping it compressed in RAM, unlike the initramfs which is unpacked at boot time.

There’s also an open-source project by Determinate Systems called nix-netboot-serve. Instead of constructing one big initramfs image ahead of time within a Nix derivation, it puts them together from the constituent store paths on-demand. The end result is very similar, but allows much speedier iteration since each store path is cached and only changed parts need to be recompressed.

netboot is great and all, but why limit yourself to booting it via iPXE? Linux has a nifty little piece of functionality that allows it to act as a bootloader itself, called kexec.

switch-root

(I go into more detail on this approach in a previous post)

Let’s go back to our normal initramfs. The init script in there uses switch_root, which tells the kernel to swap some mountpoints around in order to replace the current root filesystem with a new one.

But why limit ourselves to doing that from an initramfs? systemd exposes a command that allows shutting down the running user space, but instead of powering the machine off or rebooting, this command will do exactly what the initramfs did! I’m sure it was originally intended for systems where systemd is used as the initramfs init, but why shouldn’t we use it outside our initramfs as well?
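The command in question is `systemctl switch-root`, which takes the new root directory and optionally the init to run inside it. The Python sketch below only assembles the command line and does not run it, since running it really does tear down the current user space. It also checks for an os-release file in the target, because to my knowledge systemctl refuses to switch into a directory that lacks one. The function name is made up for this example.

```python
import os


def switch_root_command(new_root, init=None):
    """Build (but do not run) a systemctl switch-root invocation."""
    candidates = ("etc/os-release", "usr/lib/os-release")
    # lexists: a symlink counts even if its target only resolves inside new_root
    if not any(os.path.lexists(os.path.join(new_root, rel)) for rel in candidates):
        raise ValueError(f"{new_root} does not look like an OS tree (no os-release)")
    argv = ["systemctl", "switch-root", str(new_root)]
    if init is not None:
        argv.append(init)  # path of the init inside the new root
    return argv
```

Whoever uses this would pass the result to something like `subprocess.run` as root, once they are sure they want to leave the running system.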

For example, you can build a NixOS system and copy it to a tmpfs, then switch-root into that tmpfs, to boot into NixOS from another distribution without leaving any traces (besides shell history) behind.

I’ve also used it to put an Ubuntu installation on my laptop without letting it touch the bootloader, without having to repartition the disk, and without having to mess around to get zfs support in Ubuntu – since I was still running NixOS’s kernel!

The reuse of the kernel makes this approach applicable in scenarios where kexec is infeasible, such as if the kernel doesn’t implement it, or it doesn’t work on the hardware you have.

Your mileage may vary for anything that relies on the kernel here, since the system you’re switching into is very likely to have an incompatible set of kernel modules, which won’t be loadable. This means that hotplugging devices whose drivers weren’t already loaded or which need firmware files not provided by the target system will fail, for instance.

This approach is a bit more versatile than anything purely initramfs-based since it can use real filesystems.

Custom images

NixOS’s own installer images are built from full NixOS configurations. Like the netboot module, the ISO image module copies the system package into a squashfs. It then takes the squashfs, the kernel, a specialised initramfs, a boot loader with appropriate config, and the necessary bits and bobs for BIOS and UEFI to recognise it as bootable, and bundles them together into an ISO image which you can write to a USB stick or, if you’re feeling nostalgic, to optical media.

Single-board computers often boot from SD cards. NixOS has the tooling for building SD images as well, since that’s the workflow users coming from other distributions have come to expect. Generating non-installer images is a bit of an antipattern for a number of reasons (hit me up on Matrix if you want to hear me rant about it), but it can be very useful nonetheless.

Disk/VM/cloud images

If you’re not working with hardware you can touch, VM images can be very handy! You can generate a base image preconfigured with your SSH keys and standard tools to enable quickly spinning up new VMs (clone image, boot image), or you can configure the services you want the VM to provide, build an image from there, and boot that without any further configuration or deployment steps.

At Determinate Systems, we’ve been working on a tool called Ephemera, which works similarly to nix-netboot-serve: instead of building a disk image inside a Nix derivation, it builds a NixOS system package into a disk image outside the sandbox. This enables caching some parts common to multiple images and greater parallelisation, and brings image build times down to only a few seconds. The images are stateless, so it is expected that if the configuration needs to be altered, the image will be replaced. After all, it’s cheap to build!

Combined with the AWS snapshot upload tool coldsnap, this allows going from a NixOS configuration to an AMI faster than any other toolset for building AMIs known to us!

But Ephemera is by no means limited to AWS. It should be usable on any cloud provider that allows uploading custom disk images, and has also been tested on local QEMU VMs and on hardware.

We’re very excited about it, but it’s currently still in its early stages and we’re running a closed alpha for user testing. Ask us if you’re interested!

NixOS VM scripts and NixOS tests

Even with Ephemera, working with disk images isn’t always the most convenient way.

Since a NixOS system package contains everything you need to boot it, it’s also pretty easy to run a NixOS VM on a host that has Nix installed – without any image generation! QEMU can boot a kernel and initramfs directly, and it has a built-in file sharing implementation that allows sharing the host’s Nix store to the guest read-only, and booting directly from the file share. NixOS allows generating scripts which take care of the tedious parts of building the QEMU commands for this. This is great for manually testing configuration changes locally without deploying them to a persistent staging system or building images.
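The core of such a QEMU invocation can be sketched as follows. This Python function only builds the argument list. The flags are standard QEMU options (`-kernel`, `-initrd`, `-append`, `-virtfs`), but the selection is a simplification for illustration: the scripts NixOS generates pass considerably more, and the guest's initramfs has to know how to mount the share. The function name and default values are assumptions made for this example.

```python
def qemu_command(kernel, initrd, cmdline, memory_mib=1024, store="/nix/store"):
    """Build a QEMU command line that boots a kernel and initramfs directly."""
    share = ",".join([
        "local",
        f"path={store}",          # host directory to share
        "security_model=none",
        "mount_tag=nix-store",    # name under which the guest sees the share
        "readonly=on",            # the guest must not modify the host's store
    ])
    return [
        "qemu-system-x86_64",
        "-m", str(memory_mib),
        "-kernel", kernel,        # no boot loader or disk image involved
        "-initrd", initrd,
        "-append", cmdline,       # kernel command line
        "-virtfs", share,
    ]


print(" ".join(qemu_command("/path/to/kernel", "/path/to/initrd", "loglevel=4")))
```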

I use these VMs to try out nixpkgs changes for reviews, to test out software I’m not yet sure I want to use, and I’ve also experimented with using them for fully-scripted NixOS installs onto physical media – being able to shut the VM down afterwards makes cleanup much simpler.

However, this VM infrastructure is also used for what I believe is one of NixOS’s most impressive features: the full-system VM-based integration testing. It runs QEMU VMs, or even networks of them, inside Nix builds to verify a huge range of functionality provided by NixOS.

We have tests for a wide variety of installation setups, which boot the ISO to install on various combinations of LVM and LUKS as well as various filesystems; the NixOS channels will not update if these tests are broken, which allows us to make changes to the installers and filesystem support with confidence that we won’t break it for everyone, or at least will usually find out before being confronted with disgruntled users.

We also have tests for many NixOS modules; the Nextcloud tests ensure that each of the 3 supported Nextcloud versions works with 4 different database configurations; our networking tests ensure that a client can talk to an external network through a router with NAT (also a NixOS VM!) and that our firewall works correctly.

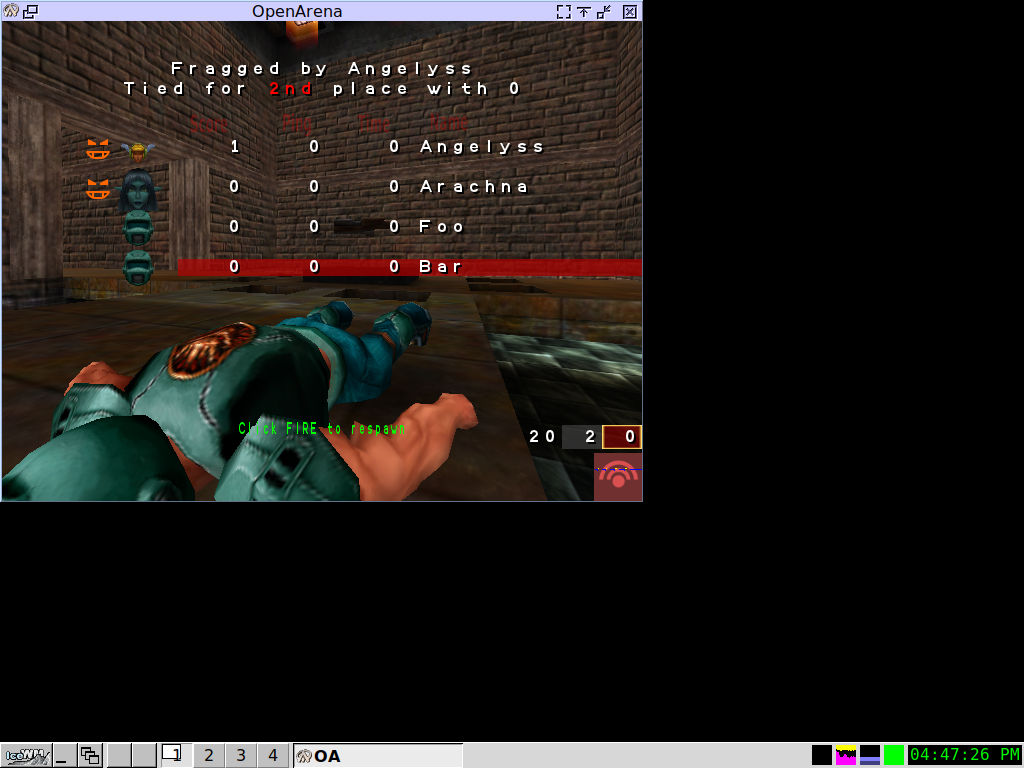

We even have an OpenArena test, which pulls up a network of VMs and runs a game server on one and bot clients on the others, taking screenshots of the action:

All this runs within the Nix sandbox: so these tests are completely independent of online services and won’t break from one day to the next due to external circumstances.

NixOS makes heavy use of them, but I’m sure there are many use cases outside Nixpkgs that would be well served by the NixOS test infrastructure.

Beyond NixOS

NixOS isn’t suitable for all machines that can run Linux, but that doesn’t mean we can’t give them the Nix treatment! Thanks to nixpkgs’s cross-compilation support and some kernel patches cribbed from OpenWRT, I run a small Linux system built with Nix on my WiFi access point. It’s a little kernel and initramfs which I currently boot via the network through U-Boot’s TFTP support. The init is just a shell script that starts the necessary bits to supply network connectivity and dropbear for SSH access – which I haven’t really needed since implementing it, since it just works!

Daniel Barlow has gone a little further with the concept (and his work predates mine) and written NixWRT (though he’s currently rewriting it), which supports a wider range of devices and powers his whole home network, router and all.

samueldr also has an interesting project going on around building embedded Linux systems with Nix, named celun. It currently builds systems for various obscure gaming handhelds, as well as the Tow-Boot installer.

I think there’s a lot of space to be explored here, and am looking forward to what samueldr, Dan, and the rest of the community will dream up over the next few years!

Back to netboot

Let’s talk about netboot again. These are things I haven’t done before and that may not have generic practical implementations yet, so take these with a grain of salt.

Serving a full-system initramfs is an easy way to get your NixOS on a machine, providing it has enough RAM and/or swap, but it’s certainly not the most efficient. Classical netboot setups only serve a small initramfs containing the network drivers and configuration for mounting a network filesystem as the root. This is, of course, also possible with NixOS! Certain properties of Nix make it possible to do this much more efficiently as well. For instance, the Nix store is practically append-only: once a path is there, it will only change in very unusual circumstances, and even then should be functionally equivalent.

This means that store paths can be cached by clients indefinitely. This would combine the flexibility and central manageability of classic netboot setups with the performance of a local installation.
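The caching idea can be illustrated with a toy Python class. Because a store path's contents are treated as immutable, the cache never needs to revalidate or expire an entry: the path itself is the cache key. This is purely a thought experiment in code, with hypothetical names throughout; a real client would also need to verify signatures and manage disk space.

```python
class StorePathCache:
    """Cache store path contents forever, fetching each path at most once."""

    def __init__(self, fetch):
        self._fetch = fetch      # function that retrieves a path from the server
        self._contents = {}      # store path -> contents
        self.fetch_count = 0     # how often the server was actually contacted

    def get(self, store_path):
        # No freshness check needed: a given store path never changes
        if store_path not in self._contents:
            self._contents[store_path] = self._fetch(store_path)
            self.fetch_count += 1
        return self._contents[store_path]


def fake_server(store_path):
    """Stand-in for a network fetch, for demonstration purposes only."""
    return f"contents of {store_path}"


cache = StorePathCache(fake_server)
cache.get("/nix/store/[...]-linux-6.0")
cache.get("/nix/store/[...]-linux-6.0")  # served locally on the second call
print(cache.fetch_count)
```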

There’s a lot of room for creativity here. Since store paths are immutable and can be signed, they can be obtained safely from untrusted sources. This makes peer-to-peer distribution possible, meaning that the netboot server can be provisioned far more economically without impacting performance.

Conclusion

A lot of operating systems can boot in these ways. What I think is fairly unique to NixOS is that because everything is pulled together from declarative config into an immutable package, it’s much easier to do all these things, and reuse the same pieces of config in multiple boot scenarios.

You can take the NixOS configuration running on your laptop, factor out any bits that are specific to the hardware, and get your laptop’s configuration running in any of these environments in a matter of minutes.

You can build installer images with preconfigured WiFi and SSH so that you can install on hardware remotely; you can put SuperTuxKart and OpenArena in for geeky open-source LAN parties. A few years ago, I generated NixOS images with a patched version of another open-source game to allow my Windows-using friends to play with some of the bugs fixed.

The possibilities are endless! The nixos-generators project pulls several of these options together into one convenient command, and is a great way to get started playing with them. You can find more related resources on the links page I set up to accompany the talk.

You can probably tell I’m quite excited about this. If you’re excited about it too, I’d love to hear if you implement the peer-to-peer-super-cached netboot setup, or if you come up with and implement more exotic ideas. You can find me on Matrix at @linus:schreibt.jetzt, or drop me an email at the equivalent address.